### Harvard’s Tiny Marvel: The Future of Quantum Computing in a Chip

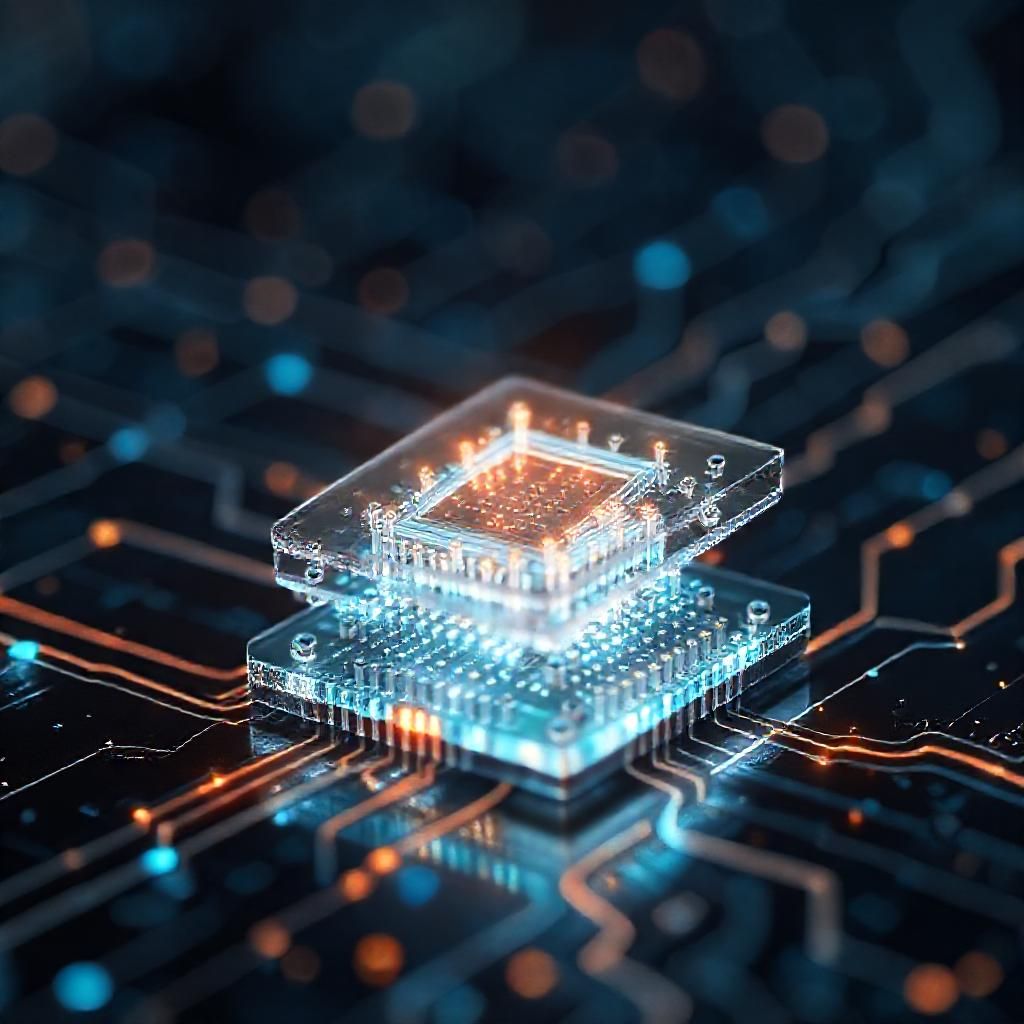

Imagine a world where the immense power of quantum computing is accessible, not in vast, temperature-controlled labs, but in devices as compact as a smartphone. Harvard’s recent breakthrough brings us a step closer to that reality. Researchers have created an ultra-thin metasurface chip, a single flat device that stands in for a whole assembly of optical parts and could change how quantum computers are built.

#### The Problem with Current Quantum Tech

Quantum hardware built on light has long been complex and bulky. Traditional setups chain together multiple optical components, each essential for performing the delicate dance of quantum operations. These components are cumbersome, and every added part makes the system harder to scale and to keep stable. Many other quantum platforms face a further hurdle: they often require extremely low temperatures to function.

#### Enter the Metasurface

Harvard’s team has crafted a nanostructured layer, thinner than a human hair, that replaces these bulky components. The metasurface is designed to manipulate light at the quantum level, generating entangled photons and carrying out sophisticated quantum operations. Its appeal lies in its simplicity: one flat layer does the job of many separate parts.

#### The Role of Graph Theory

To achieve this design, the researchers turned to graph theory, a branch of mathematics that represents objects as points and the relationships between them as connecting lines. Framing the problem this way simplified an otherwise intricate design process and helped ensure that a single metasurface could carry out complex quantum tasks.
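To make the idea of a graph concrete, here is a minimal Python sketch. It is a toy illustration only, not the team's actual design method: the node names, the edges, and the two helper functions are all hypothetical. Nodes stand in for optical paths, an edge marks a pair of paths that are allowed to interact, and the code asks a typical graph-theory question: in how many ways can every path be paired with a partner?

```python
# Hypothetical toy graph: nodes stand for optical paths, edges mark
# pairs of paths that may interact. Illustrative assumption only.
nodes = {"a", "b", "c", "d"}
edges = {("a", "b"), ("b", "c"), ("c", "d"), ("d", "a")}


def neighbors(node, edges):
    """Return the set of nodes that share an edge with `node`."""
    return {v if u == node else u for u, v in edges if node in (u, v)}


def perfect_matchings(nodes, edges):
    """List every way to pair up all nodes using only existing edges."""
    ordered = sorted(nodes)
    if not ordered:
        return [[]]  # one way to match nothing: the empty pairing
    first, rest = ordered[0], ordered[1:]
    result = []
    for partner in rest:
        # Edges are undirected, so check both orientations.
        if (first, partner) in edges or (partner, first) in edges:
            remaining = [n for n in rest if n != partner]
            for matching in perfect_matchings(remaining, edges):
                result.append([(first, partner)] + matching)
    return result


print(neighbors("a", edges))
print(perfect_matchings(nodes, edges))
```

For this four-node ring, the search finds two complete pairings. The point is only that once a physical layout is written down as nodes and edges, questions about it become short, checkable computations.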

#### Implications for the Future

This innovation is not just about reducing size; it’s about making quantum technology more accessible. Because the device could potentially operate at room temperature, it might one day be integrated into everyday technology, paving the way for advances in secure communications, more powerful computation, and beyond. The compact nature of the metasurface could also lead to more scalable quantum networks, expanding the reach and capability of quantum computing.

#### A Leap Forward

Harvard’s achievement marks a significant step forward in room-temperature quantum technology and photonics. It’s a testament to how interdisciplinary approaches can drive breakthroughs, blending physics, mathematics, and engineering to redefine what’s possible.

As we stand on the brink of a quantum revolution, innovations like this one inspire awe and point to a future where quantum hardware is small and robust enough to leave the lab, all thanks to a tiny chip from the brilliant minds at Harvard.

Stay tuned—quantum computing is about to get a lot more exciting!