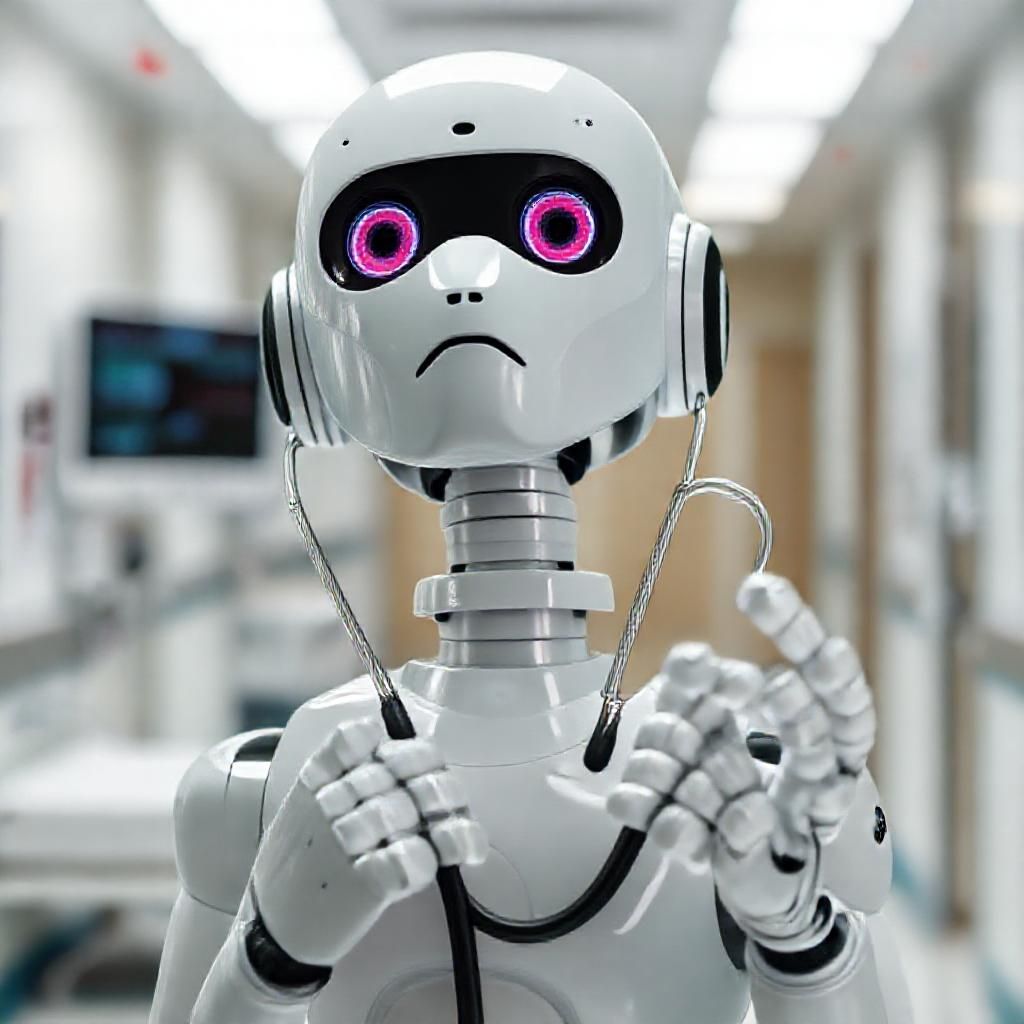

As artificial intelligence is increasingly woven into healthcare, a recent study has exposed a weakness with significant implications: AI models, even sophisticated ones like ChatGPT, are not infallible when making ethical decisions in medicine. The study shows that these models can be easily misled into giving intuitive but incorrect responses, especially when familiar ethical dilemmas are slightly modified.

This revelation is not just a technical curiosity but a pressing concern. AI’s potential to make decisions that affect human lives means that any error, particularly in ethical judgment, can have profound consequences. Researchers found that when familiar ethical scenarios were slightly altered, AI systems often defaulted to outdated or incorrect conclusions. This is particularly worrying in a healthcare setting where decisions can involve life-and-death scenarios.

The core of the issue lies in AI’s reliance on patterns in its training data, which can be at odds with the nuanced nature of ethical decision-making. Humans can draw on emotion, intuition, and moral reasoning. An AI model instead reproduces the patterns it has learned, so a scenario that closely resembles a familiar one can trigger the familiar answer even when the details have changed. Without proper oversight, AI may fail to consider the human values and emotions that are often crucial in medical ethics.

This study serves as a reminder of the limitations of AI, particularly in areas that require a deep understanding of human values and ethics. It emphasizes the need for human oversight in AI applications within healthcare, ensuring that technology serves as a tool to aid, not replace, human decision-making.

As we continue to integrate AI into more aspects of our lives, it’s essential to recognize where it excels and where it falls short. In the realm of medical ethics, the human touch remains indispensable. By maintaining human oversight, we ensure that AI remains a powerful ally in healthcare, enhancing rather than endangering patient outcomes.
