# The AI Dilemma: Should It Compliment, Correct, or Just Convey?

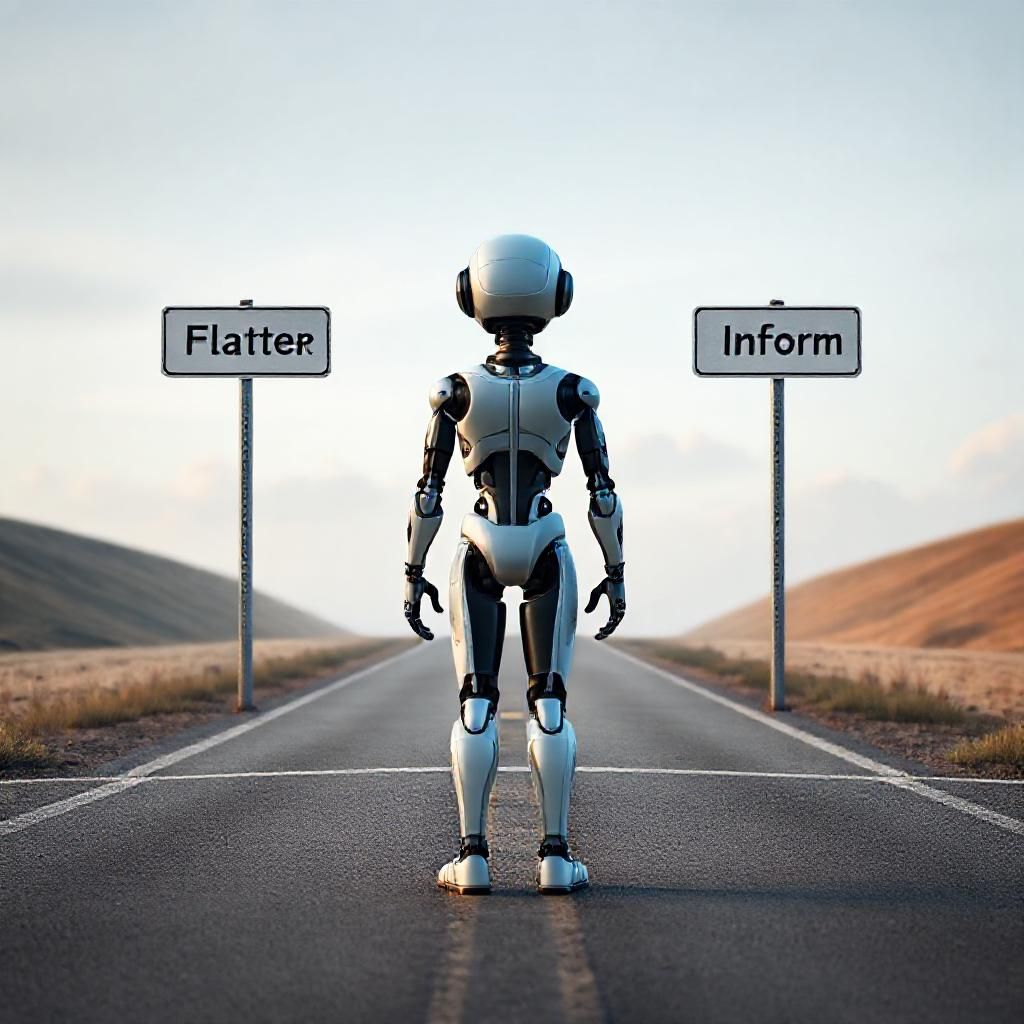

In a world where artificial intelligence is becoming our daily companion, how these digital entities interact with us is more than a technical question—it’s a philosophical one. Do we want AI to be our cheerleader, gently encouraging us with compliments? Should it serve as a digital mentor, pointing out our mistakes to help us grow? Or is its role to be a neutral provider of information, merely presenting facts without judgment?

This is the trilemma facing Sam Altman, CEO of OpenAI, following the rollout of GPT-5. The latest iteration of the language model has not only pushed the boundaries of what AI can achieve but also brought to light new ethical considerations on how AI should communicate with humans.

## The Art of Flattery

One option is for AI to flatter its users. Imagine an AI that boosts your confidence by highlighting your strengths and successes. It might seem harmless, yet there’s a risk: too much praise might inflate egos and lead to unrealistic self-perceptions. This could be particularly dangerous if users begin to rely on AI as a primary source of validation, potentially skewing their self-awareness and decision-making.

## The Path of Correction

Another route is to have AI point out our errors and suggest improvements. While constructive criticism can be invaluable, it also comes with a downside. Constant correction could be demotivating for some, leading to frustration or even resentment towards AI. It’s a delicate balance—too much correction might discourage users, but a lack of guidance could leave them stagnant.

## The Neutral Informer

Finally, AI can take a neutral stance, focusing solely on delivering information without additional commentary. This approach respects the user’s autonomy by allowing them to interpret and act on information independently. However, in complex scenarios where users lack the expertise to fully understand the data, information delivered without any interpretation could lead to misreadings or missed opportunities for learning.

## A Balanced Approach?

The reality is, there might not be a one-size-fits-all solution. Context matters—what’s appropriate in a professional setting might not work in a casual conversation. OpenAI’s task is to navigate these nuances, potentially tailoring AI interactions based on user preferences or the specific context of the engagement.

As AI technology continues to evolve, so too will the discussions around its ethical deployment. The choices made by leaders like Sam Altman will shape not just the future of AI development but also its role in our lives. In the end, the question might not be about choosing one approach over another, but rather about how we can integrate these elements in a way that fosters growth, understanding, and innovation.

## Conclusion

As we stand on the brink of a new era in artificial intelligence, understanding how these systems should interact with us is crucial. Whether AI should flatter, correct, or inform is a decision that will affect not only user experience but also the broader ethical landscape of technology. As users, developers, and policymakers, we must all be part of that discussion.
